Have you created an automation script for Continuous Delivery to Public Cloud Platforms?

Have you ever lost some important data in a local disk crash?

No one likes to face such circumstances. It is better to deliver our applications to the cloud and relax, with automated provisioning integrated into the process.

There are many ways to do that. One way is to choose your desired cloud platform, be it AWS, GCP, Azure, or any other platform you are comfortable working with, and then select a generic machine image. Here we'll be talking about AWS, so the image in use is an AMI (Amazon Machine Image). We then create an instance, specifying the configuration our application needs to work properly.

This sounds like an easy task, but it requires manual intervention from the developer. So I came up with this blog, which will help you understand how to automate infrastructure setup with popular tools such as Packer by HashiCorp, Terraform by HashiCorp, and Ansible by Red Hat.

The only prerequisite for continuous delivery of your applications with the tools mentioned below is a working knowledge of each of them.

The technology stack used to perform the continuous delivery is as follows:

1. GitHub- For our source code management. It also handles the continuous integration part with the help of GitHub Packages.

2. AWS console- For providing the platform on which continuous delivery takes place.

3. Packer- For automating the creation of the AMI.

4. Ansible- For configuring the AMI baked by Packer and provisioning the Docker image of our application.

5. Terraform- For automating the creation of the EC2 instance.

PACKER

Packer is an open-source tool for building machine images, and it is used in the IaaS space. It is becoming popular because more and more services are deployed remotely and delivered to consumers over a network, using cloud or container techniques. The biggest problem with pre-written images is that they are written by the development team, so the operations team finds it hard to change them because of the steep learning curve. Moreover, it is difficult to automate infrastructure using pre-written images.

In short, Packer lets users create the AMI they want. An Amazon Machine Image (AMI) provides the information needed to launch an instance. You must specify an AMI when you launch an instance.

FEATURES

* Helpful in creating identical machine images for multiple platforms from a single source configuration. It automates the creation of any type of machine image.

* Multi-provider portability- Packer creates identical images that can be deployed on public cloud (AWS, GCP, Azure, etc.), private cloud (OpenStack, etc.), or local desktop machines (VirtualBox, VMware).

* Fast- Because the image is already provisioned and configured, new machines can be launched from it in seconds.

* Improved Stability- Software and dependencies are installed and configured when the image is built rather than when the infrastructure is launched, so problems surface at build time.

Here Packer is used for continuous delivery on AWS. It creates a portable, lightweight image that is configured with Ansible, which manages and provisions the software in the Docker image of our application.
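To make the Packer-plus-Ansible idea concrete, here is a minimal Python sketch that builds a Packer template in the legacy JSON format, with an `amazon-ebs` builder and an `ansible` provisioner. The region, base AMI ID, instance type, SSH user, AMI name, and playbook path are all placeholder assumptions, not values taken from the repository used in this blog.

```python
import json


def build_packer_template(region, source_ami, playbook_file):
    """Return a Packer JSON template (as a dict) for an Ansible-configured AMI."""
    return {
        "builders": [
            {
                "type": "amazon-ebs",         # builds an EBS-backed AMI
                "region": region,
                "source_ami": source_ami,     # generic base image to start from
                "instance_type": "t2.micro",  # assumed size of the temporary build instance
                "ssh_username": "ubuntu",     # assumed login user of the base image
                # Hypothetical name; Packer substitutes the timestamp at build time.
                "ami_name": "demo-app-{{timestamp}}",
            }
        ],
        "provisioners": [
            {
                "type": "ansible",            # runs the playbook against the build instance
                "playbook_file": playbook_file,
            }
        ],
    }


if __name__ == "__main__":
    template = build_packer_template(
        region="us-east-1",                   # placeholder region
        source_ami="ami-0123456789abcdef0",   # placeholder base AMI ID
        playbook_file="playbook.yml",         # placeholder playbook path
    )
    print(json.dumps(template, indent=2))
```

Saving the printed JSON to a file gives something Packer can check with `packer validate` before any build is attempted.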

ANSIBLE

Ansible takes responsibility for the management and provisioning of servers in a fully automated environment. Integrating Ansible to automate your applications in AWS is very promising for the overall success of a cloud initiative, because it enables IT organizations to provision entire workloads dynamically. It is one of the simplest solutions available for configuration management and is designed to be minimal, consistent, secure, and highly reliable, with an extremely low learning curve. Ansible differs from other provisioning tools in that it needs just a password or an SSH key to start managing systems, with no agent software to install. This solves the problem of “managing the management”, which is common in many automation systems.

FEATURES

* Secure and agentless- Ansible does not require root login privileges, specific SSH keys, or dedicated users, and it respects the security model of the system under management. It relies on OpenSSH, its default transport layer, for remote configuration. OpenSSH is available on many platforms, and security issues in it are usually discovered and patched quickly. Because Ansible does not depend on remote agents, nothing extra has to be installed on the managed machines to apply the desired configuration.

* Goal-oriented, not scripted- Ansible is based on a state-driven resource model. You describe the desired state of the system rather than the steps needed to reach it, and Ansible changes the system only as far as needed to bring it to that state. Its aim is to reach the end goal without depending on the traditional script-based approach.

* Beyond just Servers- Ansible can also work with networks, load balancers, web services, monitoring systems, and other devices that need to be updated automatically and continuously.

In our demo, Ansible pulls a Docker image of an example application called Java accelerator.
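The "goal-oriented, not scripted" idea can be illustrated with a toy Python model. This is only a sketch of the desired-state concept, not how Ansible is implemented, and the package names and states are made up for the example. The point is that applying the same desired state twice produces no further changes.

```python
def plan_changes(current, desired):
    """Compare current and desired state and return only the changes still needed."""
    changes = []
    for name, wanted in sorted(desired.items()):
        if current.get(name) != wanted:
            changes.append((name, wanted))
    return changes


def apply_changes(current, changes):
    """Return a new state dict with the planned changes applied."""
    new_state = dict(current)
    for name, wanted in changes:
        new_state[name] = wanted
    return new_state


if __name__ == "__main__":
    current = {"docker": "absent", "nginx": "present"}   # hypothetical starting state
    desired = {"docker": "present", "nginx": "present"}  # hypothetical goal

    first_run = plan_changes(current, desired)
    print(first_run)                      # [('docker', 'present')]

    state = apply_changes(current, first_run)
    print(plan_changes(state, desired))   # [] because nothing is left to change
```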

TERRAFORM

It's a powerful tool for building, changing, and versioning infrastructure safely and efficiently. It can manage existing, popular service providers as well as custom in-house solutions. It generates an execution plan describing what it will do to reach the desired state, and then executes that plan to build the required infrastructure. When the configuration changes, Terraform works out what changed and creates an updated plan to match.

FEATURES

* Infrastructure as code- Infrastructure is described in a high-level configuration syntax. With Terraform, a blueprint of the datacenter can be versioned like any other code, and it can be reused and shared for similar requirements.

* Resource Graph- Terraform builds a graph of all the required resources and their dependencies, and creates or modifies independent resources in parallel, which makes provisioning efficient.

* Change Automation- Complex changesets can be applied to the infrastructure automatically or with minimal human intervention, which reduces the possibility of human error.

In our workflow, a Terraform script builds the EC2 instance that serves as our infrastructure. It uses the unique AMI ID that Packer generates after successfully creating the newly configured AMI.
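The resource graph idea can be sketched with Python's standard `graphlib` module. This is an illustration of dependency ordering in general, not Terraform's internal algorithm, and the resource names below are hypothetical. Resources in the same batch do not depend on each other, so they could be created in parallel.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def creation_batches(dependencies):
    """Group resources into batches; each batch depends only on earlier batches."""
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose dependencies are done
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    # Hypothetical resources: each key depends on the resources in its set.
    dependencies = {
        "aws_instance.app": {"aws_security_group.web", "aws_subnet.main"},
        "aws_security_group.web": {"aws_vpc.main"},
        "aws_subnet.main": {"aws_vpc.main"},
        "aws_vpc.main": set(),
    }
    for batch in creation_batches(dependencies):
        print(batch)
```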

Let's automate the continuous deployment workflow using the following steps:

- Upload script files to GitHub

- Set up an IAM user and create access and secret access keys

- Set up AWS CLI on the local workstation

- Create the cloud image using Packer

- Verify the creation of the EBS snapshot and AWS AMI on the EC2 dashboard

- Modify the Terraform script with the AMI ID created

- Run Terraform commands to launch the EC2 instance

- Verify EC2 instance creation on the EC2 dashboard

1. Push the script files to GitHub

Read and use the Packer script, Ansible Playbook and HCL Terraform scripts from this GitHub repository.

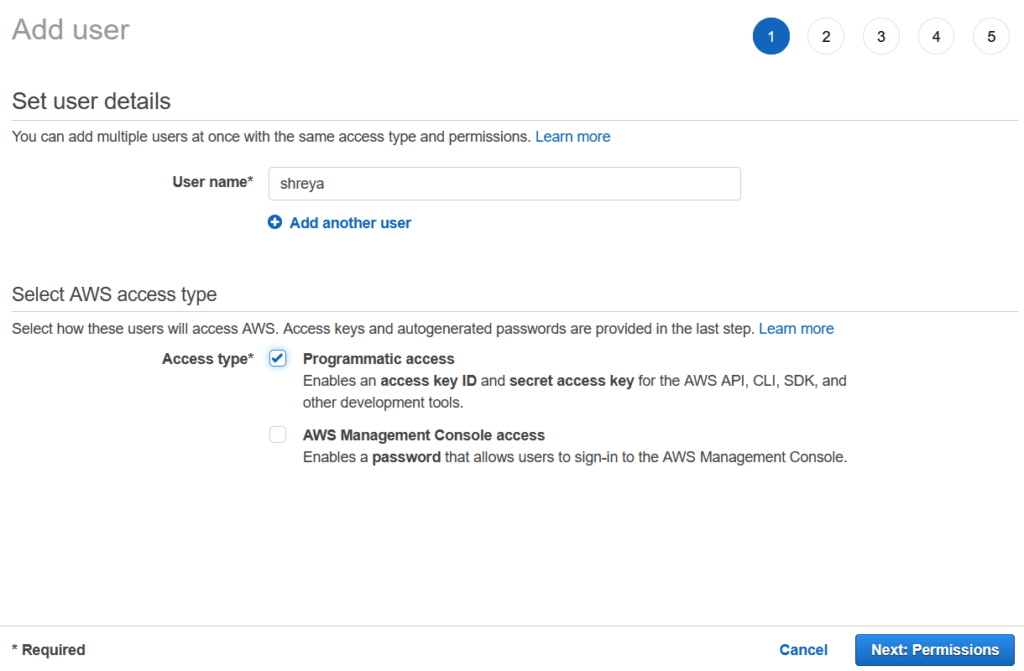

2. Create an IAM user and generate access keys

3. Set up AWS CLI on the local workstation

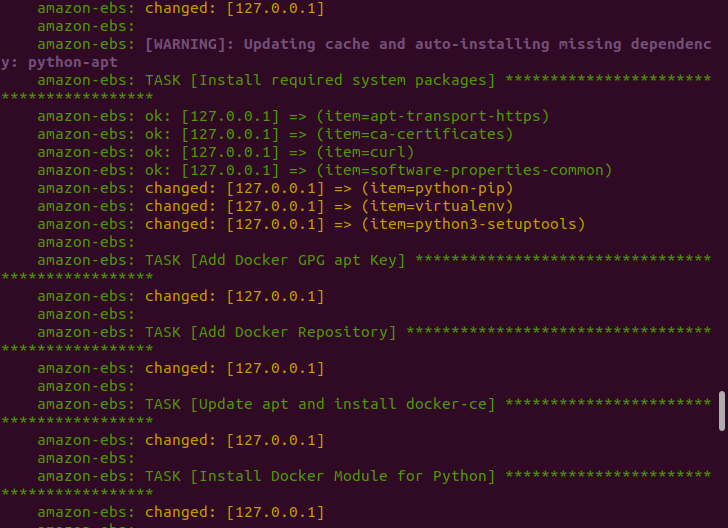
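Setting up the AWS CLI mostly means storing the access keys and a default region in two small INI files, which is what `aws configure` does interactively. As a rough sketch, the same files can be written from Python. The key values below are the dummy examples used in AWS documentation, the region is a placeholder, and the demo writes to a temporary directory rather than your real `~/.aws` folder.

```python
import configparser
import tempfile
from pathlib import Path


def write_aws_profile(aws_dir, access_key, secret_key, region):
    """Write 'credentials' and 'config' files for the default AWS CLI profile."""
    aws_dir = Path(aws_dir)
    aws_dir.mkdir(parents=True, exist_ok=True)

    credentials = configparser.ConfigParser(interpolation=None)
    credentials["default"] = {
        "aws_access_key_id": access_key,
        "aws_secret_access_key": secret_key,
    }
    cred_path = aws_dir / "credentials"
    with open(cred_path, "w") as fh:
        credentials.write(fh)
    cred_path.chmod(0o600)  # keep the secret readable by the owner only

    config = configparser.ConfigParser(interpolation=None)
    config["default"] = {"region": region, "output": "json"}
    config_path = aws_dir / "config"
    with open(config_path, "w") as fh:
        config.write(fh)

    return cred_path, config_path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cred_path, config_path = write_aws_profile(
            tmp,
            access_key="AKIAIOSFODNN7EXAMPLE",                      # dummy key
            secret_key="wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY",  # dummy secret
            region="us-east-1",                                     # placeholder region
        )
        print(cred_path.read_text())
        print(config_path.read_text())
```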

4. Run the Packer script to create the cloud image
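When Packer is run with the `-machine-readable` flag, it prints comma-separated lines, and the finished AMI appears on an `artifact` line as `region:ami-id`. The Python sketch below pulls the AMI ID out of such output. The sample text is hand-written for illustration and is not a log from the build in this blog, so treat the exact field layout as an assumption to check against your own Packer version.

```python
PACKER_COMMA = "%!(PACKER_COMMA)"  # how Packer escapes commas inside a field


def extract_ami_ids(machine_readable_output):
    """Return {region: ami_id} parsed from `packer build -machine-readable` output."""
    amis = {}
    for line in machine_readable_output.splitlines():
        fields = line.strip().split(",")
        # Assumed line shape: timestamp,target,type,data...
        if len(fields) >= 6 and fields[2] == "artifact" and fields[4] == "id":
            # The id field holds "region:ami-id", one pair per region.
            for pair in fields[5].replace(PACKER_COMMA, ",").split(","):
                region, _, ami_id = pair.partition(":")
                amis[region] = ami_id
    return amis


if __name__ == "__main__":
    # Hand-written sample output, for illustration only.
    sample = "\n".join([
        "1592400000,,ui,say,==> amazon-ebs: Creating AMI",
        "1592400100,amazon-ebs,artifact-count,1",
        "1592400100,amazon-ebs,artifact,0,builder-id,mitchellh.amazonebs",
        "1592400100,amazon-ebs,artifact,0,id,us-east-1:ami-0123456789abcdef0",
    ])
    print(extract_ami_ids(sample))  # {'us-east-1': 'ami-0123456789abcdef0'}
```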

5. Verify EBS Snapshot and AWS AMI creation on the EC2 Dashboard.

6. Modify the Terraform script with the generated AMI ID
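Editing the AMI ID by hand is easy to get wrong, so this step can also be scripted. The Python sketch below swaps the value of the first `ami = "..."` argument in a piece of HCL text. The resource block shown is a hypothetical example, not the script from the repository, and the approach assumes the AMI ID is written as a plain string literal.

```python
import re

# Matches an `ami = "..."` argument at the start of a line, keeping the left-hand side.
AMI_ARGUMENT = re.compile(r'^(\s*ami\s*=\s*)"[^"]*"', re.MULTILINE)
AMI_ID = re.compile(r"ami-[0-9a-f]{8,17}")


def set_ami_id(hcl_text, ami_id):
    """Return hcl_text with the first `ami = "..."` value replaced by ami_id."""
    if not AMI_ID.fullmatch(ami_id):
        raise ValueError(f"not a valid-looking AMI ID: {ami_id!r}")
    new_text, count = AMI_ARGUMENT.subn(
        lambda match: f'{match.group(1)}"{ami_id}"', hcl_text, count=1
    )
    if count == 0:
        raise ValueError("no `ami = \"...\"` argument found")
    return new_text


if __name__ == "__main__":
    # Hypothetical Terraform resource, for illustration only.
    terraform_script = '''resource "aws_instance" "app" {
  ami           = "ami-00000000"
  instance_type = "t2.micro"
}
'''
    print(set_ami_id(terraform_script, "ami-0123456789abcdef0"))
```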

7. Run Terraform commands to launch the EC2 instance

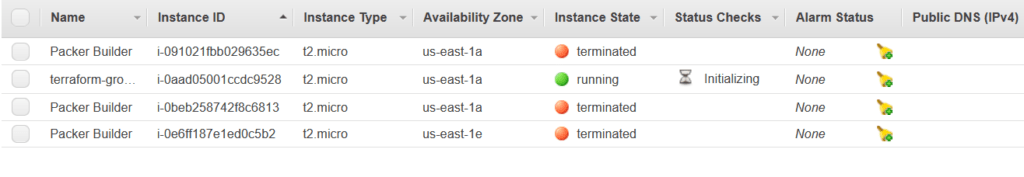
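The Terraform commands for this step usually follow the order init, plan, apply. As a small sketch, they can be wrapped in a Python helper that prints each command and only executes it when asked to. The plan file name `tfplan` is an arbitrary choice, and the helper assumes `terraform` is on your `PATH` and that it is run from the folder holding the Terraform script.

```python
import subprocess


def terraform_commands(plan_file="tfplan"):
    """Return the init, plan, and apply commands in the order they should run."""
    return [
        ["terraform", "init", "-input=false"],
        ["terraform", "plan", "-input=false", f"-out={plan_file}"],
        ["terraform", "apply", "-input=false", plan_file],
    ]


def run_all(commands, workdir=".", dry_run=True):
    """Print each command and run it unless dry_run is set; return the command count."""
    for command in commands:
        print("$", " ".join(command))
        if not dry_run:
            # check=True stops the sequence as soon as one command fails
            subprocess.run(command, cwd=workdir, check=True)
    return len(commands)


if __name__ == "__main__":
    # Dry run only: shows what would be executed without touching AWS.
    run_all(terraform_commands(), dry_run=True)
```

Applying a saved plan file means the changes that get applied are exactly the ones that were reviewed in the plan step.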

8. Verify successful creation of the EC2 instance on the AWS EC2 dashboard
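Besides looking at the dashboard, the same check can be done from the command line by saving the JSON output of `aws ec2 describe-instances` and searching it for instances launched from the new AMI. The Python sketch below does the searching part. The JSON in the demo is a trimmed, made-up response with placeholder IDs, not real output from this setup.

```python
import json


def instances_for_ami(describe_instances_json, ami_id):
    """Return (instance_id, state) pairs for instances launched from ami_id."""
    response = json.loads(describe_instances_json)
    found = []
    for reservation in response.get("Reservations", []):
        for instance in reservation.get("Instances", []):
            if instance.get("ImageId") == ami_id:
                found.append((instance["InstanceId"], instance["State"]["Name"]))
    return found


if __name__ == "__main__":
    # Trimmed, made-up response with placeholder IDs.
    sample = json.dumps({
        "Reservations": [
            {"Instances": [
                {"InstanceId": "i-0aaaaaaaaaaaaaaaa",
                 "ImageId": "ami-0123456789abcdef0",
                 "State": {"Name": "running"}},
                {"InstanceId": "i-0bbbbbbbbbbbbbbbb",
                 "ImageId": "ami-0fedcba9876543210",
                 "State": {"Name": "stopped"}},
            ]}
        ]
    })
    print(instances_for_ami(sample, "ami-0123456789abcdef0"))
    # [('i-0aaaaaaaaaaaaaaaa', 'running')]
```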

