GPT-4 Vision: A Busy Person’s Guide

GPT-4 Vision, also called GPT-4V (API model name gpt-4-vision-preview), is one of the leading models for visual question answering (VQA). We can pass it multiple images and ask questions about them. It is a proprietary model and part of the GPT series released by OpenAI.

For a long time, users were limited to a single input type, text. GPT-4V adds vision, meaning images are now a supported input type. As of this writing, only OpenAI's Chat Completions API supports image inputs; the Assistants API does not.

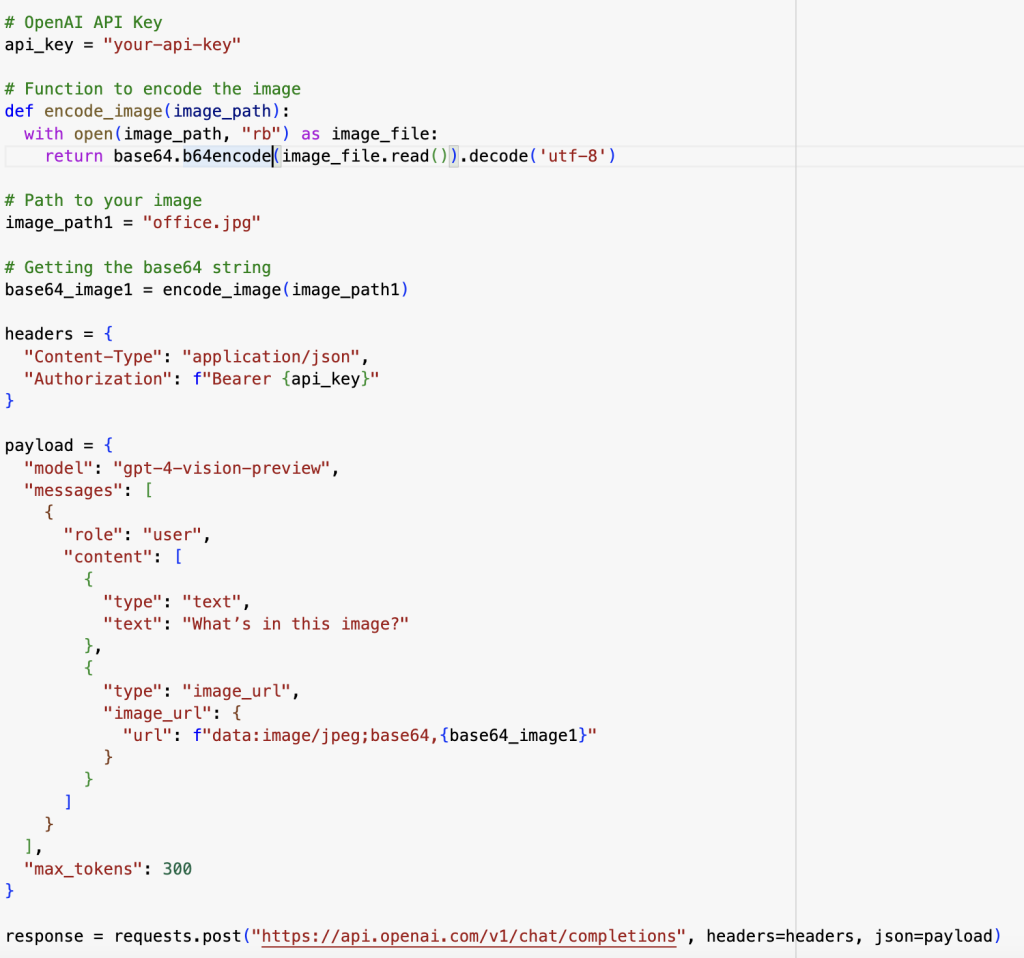

Let’s delve right into the code and see how the API works.

You can get your API key from the settings page of your OpenAI account. Link: https://platform.openai.com/

First, the encode_image function converts the image to a base64 string, and the API key is passed as a bearer token in the request headers. In the payload, the model parameter is set to gpt-4-vision-preview. The message role is user, and its content carries two types of input: a text part (“What’s in this image?”) and an image part holding our base64-encoded image. The max_tokens value of 300 caps the number of tokens generated in the response. Finally, we send the headers and payload to the chat/completions endpoint and get back the answer to our question.
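The steps described above can be sketched roughly as follows. This is a minimal sketch, not a definitive implementation: it assumes the `requests` package is installed, that the key is stored in an `OPENAI_API_KEY` environment variable, and that the image is a JPEG. The `build_payload` and `ask_about_image` helpers and the `office.jpg` file name are hypothetical names introduced here for illustration.

```python
import base64
import os

API_URL = "https://api.openai.com/v1/chat/completions"


def encode_image(image_path):
    """Read an image file and return its contents as a base64 string."""
    with open(image_path, "rb") as image_file:
        return base64.b64encode(image_file.read()).decode("utf-8")


def build_payload(base64_image, question="What's in this image?"):
    """Build the request body: one user message holding a text part and an image part."""
    return {
        "model": "gpt-4-vision-preview",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        # Assumption: the file is a JPEG; adjust the MIME type otherwise.
                        "image_url": {"url": f"data:image/jpeg;base64,{base64_image}"},
                    },
                ],
            }
        ],
        # Upper limit on the number of tokens generated in the answer.
        "max_tokens": 300,
    }


def ask_about_image(image_path, api_key):
    """Send the image and question to the chat/completions endpoint and return the parsed JSON."""
    # Imported here so the helpers above work without the third-party dependency.
    import requests

    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",  # API key passed as a bearer token
    }
    payload = build_payload(encode_image(image_path))
    response = requests.post(API_URL, headers=headers, json=payload, timeout=60)
    response.raise_for_status()
    return response.json()


if __name__ == "__main__":
    # "office.jpg" is a placeholder path; OPENAI_API_KEY is an assumed variable name.
    result = ask_about_image("office.jpg", os.environ["OPENAI_API_KEY"])
    print(result["choices"][0]["message"]["content"])
```

To ask about several images in one request, append more `image_url` entries to the same `content` list.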

Example:

1) Image input:

Output response:

“The image shows three people working at a modern office environment. They are seated at desks equipped with computers; the woman on the left is using an Apple iMac, and the two men are using Dell monitors, possibly with desktop computers or docked laptops. The individuals are focused on their screens and appear to be engaged in their tasks.

The workspace has a contemporary feel, with a large window in the background offering a view of greenery outside, suggesting a pleasant work environment that might promote well-being. Papers, pens, and other work-related items can be seen on the desks, indicating active use. The overall impression is of a professional setting with employees absorbed in their work.”

Quite good, right? But it does hallucinate at times. Companies report high scores on evaluation benchmarks, but only after testing the model on real-world data do we understand the true depth of its performance. Overall, though, GPT-4V is the preferred choice of many developers for VQA tasks. Although Google’s Gemini has entered the market with a catchy promo video, GPT-4V still holds the preference for now.

But only time will tell: who will reach AGI first?

Official document link :