Exploring Ab Initio Architecture: Unveiling the Power of Enterprise Data Integration

Introduction

Ab Initio is a commercial software suite for data integration, analysis, and batch processing in enterprise environments. This article walks through Ab Initio’s architecture, its main components, and what each one does. Understanding that architecture helps businesses use Ab Initio to process large volumes of data, ensure data quality, and derive useful insights.

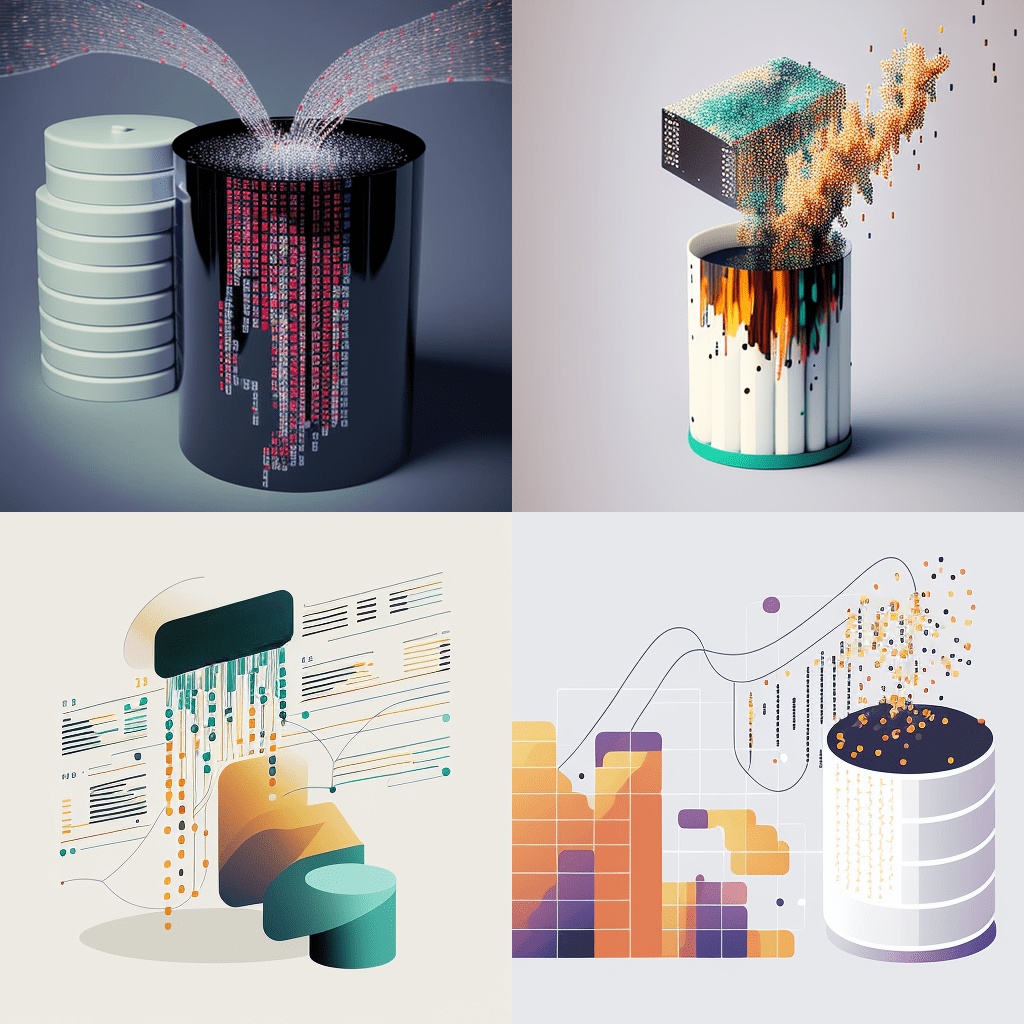

Component-Based Architecture

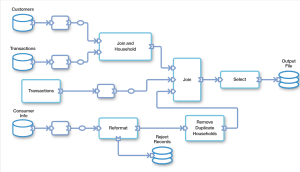

Ab Initio uses a component-based architecture: applications, called graphs, are assembled from reusable processing components (for example, components that sort, join, or reformat records) connected by data flows. The platform itself is made up of several key parts:

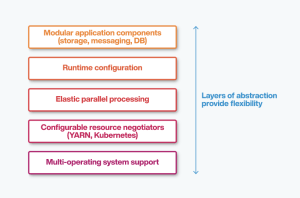

a) Co-Operating System: The Co-Operating System acts as the foundation of Ab Initio, providing a robust runtime environment for executing data integration processes. It manages parallel processing, resource allocation, and communication between components.

b) Graphical Development Environment (GDE): The GDE serves as the visual interface for designing data integration workflows. It allows users to create graphs by connecting various components and defining data sources, transformations, and destinations.

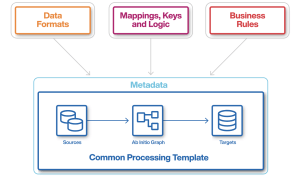

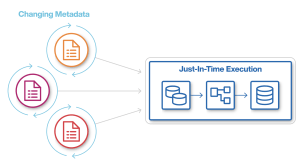

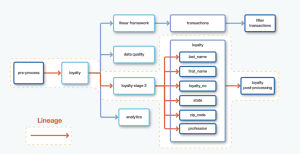

c) Enterprise Meta-Environment (EME): The EME serves as a centralized repository for storing metadata related to data integration processes. It facilitates collaboration, version control, and lineage tracking.

d) Data Manipulation Language (DML): DML is Ab Initio’s language for describing record formats (the fields and types in a dataset) and for expressing the transformation logic that components apply to each record. It provides a rich set of functions and operators for data manipulation.

e) Conduct-It: Conduct-It orchestrates and monitors data integration jobs, sequencing graphs and other tasks into larger job flows. It provides scheduling, error handling, and logging.
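In Ab Initio, graphs are drawn in the GDE and their logic is written in DML, not Python. Purely as an analogy for the "components connected by flows" idea, the following Python sketch models a tiny graph: a source, a transform, and a filter, chained so that each stage consumes the previous stage's output. The function names (`read_input`, `reformat`, `filter_by_expression`, `run_graph`) and the record fields are hypothetical stand-ins, not Ab Initio APIs.

```python
from typing import Any, Callable, Dict, Iterable, Iterator, List

Record = Dict[str, Any]
# Hypothetical: a "component" is modeled as a function from an input flow to an output flow.
Component = Callable[[Iterable[Record]], Iterator[Record]]


def read_input(rows: List[Record]) -> Iterator[Record]:
    """Source stage: emits records one at a time."""
    yield from rows


def reformat(flow: Iterable[Record]) -> Iterator[Record]:
    """Transform stage: derives a new field from existing ones."""
    for rec in flow:
        yield {**rec, "total": rec["qty"] * rec["price"]}


def filter_by_expression(flow: Iterable[Record]) -> Iterator[Record]:
    """Filter stage: keeps only records that satisfy a condition."""
    for rec in flow:
        if rec["total"] > 0:
            yield rec


def run_graph(source: Iterator[Record], components: List[Component]) -> List[Record]:
    """Wire the stages together in order (the 'flows') and collect the final output."""
    flow: Iterable[Record] = source
    for component in components:
        flow = component(flow)
    return list(flow)


if __name__ == "__main__":
    rows = [
        {"id": 1, "qty": 2, "price": 5.0},
        {"id": 2, "qty": 0, "price": 9.0},
    ]
    print(run_graph(read_input(rows), [reformat, filter_by_expression]))
    # prints [{'id': 1, 'qty': 2, 'price': 5.0, 'total': 10.0}]
```

Because each stage is a generator, records stream through the chain one at a time rather than being materialized between stages, which loosely mirrors how data moves along flows between components.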

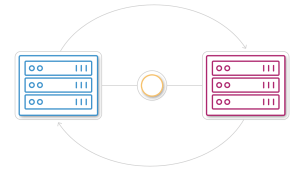

Parallel Processing

Ab Initio’s architecture is built around parallel processing, which lets it handle large data volumes efficiently. Data is divided into smaller subsets, known as partitions, and the partitions are processed at the same time on multiple processors or machines. Increasing the number of partitions, together with the hardware to run them, raises throughput, which is what makes the approach scalable.

Ab Initio combines several forms of parallelism. Data parallelism divides data into partitions and runs the same processing logic on each partition concurrently. Component parallelism executes independent components of a graph simultaneously, so unrelated branches of a workflow do not wait on one another. Pipeline parallelism lets a downstream component begin working on records as soon as an upstream component emits them, instead of waiting for the whole dataset.
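The data-parallel pattern can be sketched in Python as: partition records by a key, process each partition independently and concurrently, then merge the partial results. This is a generic illustration, not Ab Initio code; the function names and the `customer`/`amount` fields are hypothetical. Partitioning by key is what keeps the merge simple: every record for a given customer lands in the same partition, so no total is ever split across workers.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Dict, List

Record = Dict[str, Any]


def partition_by_key(records: List[Record], key: str, n: int) -> List[List[Record]]:
    """Split records into n partitions so that equal keys always share a partition."""
    partitions: List[List[Record]] = [[] for _ in range(n)]
    for rec in records:
        # crc32 gives the same result on every run, unlike Python's salted hash() for str.
        idx = zlib.crc32(str(rec[key]).encode("utf-8")) % n
        partitions[idx].append(rec)
    return partitions


def total_per_customer(partition: List[Record]) -> Dict[str, float]:
    """Per-partition work: sum amounts for each customer in this partition only."""
    totals: Dict[str, float] = {}
    for rec in partition:
        totals[rec["customer"]] = totals.get(rec["customer"], 0.0) + rec["amount"]
    return totals


def parallel_totals(records: List[Record], n: int = 4) -> Dict[str, float]:
    """Partition by customer, process partitions concurrently, then merge."""
    partitions = partition_by_key(records, "customer", n)
    # One worker per partition, analogous to one copy of a component per partition.
    with ThreadPoolExecutor(max_workers=n) as pool:
        partial_results = list(pool.map(total_per_customer, partitions))
    # A customer never spans two partitions, so merging is a plain union of dicts.
    merged: Dict[str, float] = {}
    for part in partial_results:
        merged.update(part)
    return merged


if __name__ == "__main__":
    sales = [
        {"customer": "a", "amount": 10.0},
        {"customer": "b", "amount": 5.0},
        {"customer": "a", "amount": 2.5},
    ]
    print(parallel_totals(sales, n=3))
```

Threads keep the sketch short and portable, but CPython's global interpreter lock means they will not speed up CPU-bound Python code; for that, `concurrent.futures.ProcessPoolExecutor` would be the usual substitute. Note also that the result is the same whatever value of `n` is chosen: changing the degree of parallelism changes how the work is spread, not the answer.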

Parallel processing in Ab Initio is coordinated by the Co-Operating System, which distributes tasks across processors and machines, balances the load among them, and manages communication between components.

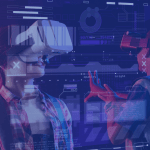

Data Quality and Governance

Ab Initio emphasizes data quality and governance to ensure accurate and reliable processing of data. The architecture incorporates various mechanisms for data validation, cleansing, and enrichment.

a) Data Profiling: Ab Initio enables data profiling to analyze and understand the characteristics of data sources. It assesses data quality, identifies anomalies, and provides insights to improve data integration processes.

b) Data Cleansing: The software offers data cleansing capabilities, allowing users to identify and rectify inconsistencies, duplicates, and errors within datasets. By cleansing the data, organizations can improve data quality and integrity.

c) Data Validation: Ab Initio supports data validation through predefined rules, custom validations, and external data references. It ensures that processed data adheres to specified standards, business rules, and regulatory requirements.

d) Metadata Management: Ab Initio’s architecture incorporates metadata management capabilities through the Enterprise Meta-Environment (EME). The EME serves as a central repository for storing metadata, including data lineage, data definitions, and transformation rules. This facilitates data governance, collaboration, and compliance.
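The profiling, cleansing, and validation steps above can be illustrated with a small Python sketch. It is not Ab Initio functionality: the field names (`id`, `email`, `age`), the cleansing steps, and the rule set are hypothetical examples chosen to show the shape of each step. Profiling summarizes a column, cleansing normalizes values and removes duplicates, and validation applies named rules and routes failing records to a separate reject list together with the reasons they failed.

```python
import re
from collections import Counter
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]


def profile_column(records: List[Record], column: str) -> Dict[str, Any]:
    """Profiling: count rows, nulls/blanks, and distinct values in one column."""
    values = [rec.get(column) for rec in records]
    non_null = [v for v in values if v not in (None, "")]
    counts = Counter(non_null)
    return {
        "rows": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(counts),
        "most_common": counts.most_common(1)[0] if counts else None,
    }


def cleanse(records: List[Record]) -> List[Record]:
    """Cleansing: trim and lower-case emails, then drop exact duplicate records."""
    seen = set()
    cleaned: List[Record] = []
    for rec in records:
        fixed = {**rec, "email": (rec.get("email") or "").strip().lower()}
        fingerprint = tuple(sorted(fixed.items()))
        if fingerprint not in seen:
            seen.add(fingerprint)
            cleaned.append(fixed)
    return cleaned


EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical business rules: (rule name, predicate that must hold).
RULES: List[Tuple[str, Callable[[Record], bool]]] = [
    ("id_present", lambda r: r.get("id") is not None),
    ("email_format", lambda r: bool(EMAIL_PATTERN.match(r.get("email") or ""))),
    ("age_in_range", lambda r: isinstance(r.get("age"), int) and 0 <= r["age"] <= 130),
]


def validate(records: List[Record]) -> Tuple[List[Record], List[Tuple[Record, List[str]]]]:
    """Validation: split records into valid ones and rejects with failed rule names."""
    valid: List[Record] = []
    rejected: List[Tuple[Record, List[str]]] = []
    for rec in records:
        failures = [name for name, rule in RULES if not rule(rec)]
        if failures:
            rejected.append((rec, failures))
        else:
            valid.append(rec)
    return valid, rejected


if __name__ == "__main__":
    raw = [
        {"id": 1, "email": " Ann@Example.com ", "age": 34},
        {"id": 1, "email": "ann@example.com", "age": 34},
        {"id": 2, "email": "not-an-email", "age": 200},
        {"id": None, "email": "", "age": 20},
    ]
    print(profile_column(raw, "email"))
    valid, rejected = validate(cleanse(raw))
    print(len(valid), "valid;", [reasons for _, reasons in rejected])
```

Keeping rejects alongside the reasons they failed, rather than silently dropping them, is what makes the results auditable, which ties back to the governance goals described above.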

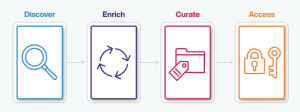

Scalability and Flexibility

Ab Initio’s architecture offers scalability and flexibility to accommodate evolving business needs and varying data volumes.

a) Horizontal Scalability: Ab Initio can scale horizontally by adding machines or processors to share the processing work. This lets throughput keep pace as data volumes grow.

b) Connectivity and Integration: Ab Initio connects to a wide range of data sources, including databases, files, and web services, so it can extract data from diverse systems and integrate with the ones an organization already runs.

c) Extensibility: Ab Initio’s architecture is designed to be extensible, enabling users to incorporate custom components and functionalities as per their requirements. This flexibility allows organizations to tailor Ab Initio to specific use cases and integrate with existing systems.

Conclusion

Ab Initio’s architecture combines a component-based design, parallel processing, data quality mechanisms, and horizontal scalability into a robust foundation for enterprise data integration. Understanding how these pieces fit together helps businesses optimize their data integration processes, improve data quality, and get more value from the large volumes of data they handle.

Image Source: https://www.abinitio.com/en/architecture/

Check out my interviews at “Professionals Unplugged”.
