AI in Robotics: Enabling Autonomy, Ethics, and Future Trends

Introduction

In the realm of technological innovation, the integration of artificial intelligence (AI) into robotics marks a pivotal advancement with profound implications. This integration involves embedding AI algorithms and machine learning capabilities into robotic systems, enabling them to perceive, learn, and make intelligent decisions autonomously. The significance of this union lies in its transformative impact across various industries, ranging from manufacturing and healthcare to autonomous vehicles and beyond. By imbuing robots with AI, we empower them to adapt to dynamic environments, handle complex tasks, and interact seamlessly with humans. This symbiotic relationship between AI and robotics not only enhances efficiency and productivity but also opens new frontiers in automation, paving the way for a future where intelligent machines play a crucial role in shaping our daily lives and industries.

The evolution of artificial intelligence (AI) and robotics has undergone a remarkable journey, marked by continuous innovation and mutual influence. In the early stages, AI focused on rule-based systems and symbolic reasoning, attempting to replicate human cognitive processes. Concurrently, robotics primarily dealt with mechanization and automation of physical tasks. However, the convergence of AI and robotics gained momentum as both fields matured. The advent of machine learning, particularly deep learning, revolutionized AI by enabling systems to learn from data and make decisions without explicit programming. In parallel, robotics witnessed advancements in sensor technologies, actuators, and feedback systems, enhancing the physical capabilities of machines. The synergy between AI and robotics became evident with the development of intelligent robotic systems capable of learning from their environment and adapting to unforeseen challenges. This convergence not only propelled the capabilities of autonomous robots but also fostered interdisciplinary collaborations, giving rise to a new era where intelligent machines seamlessly blend cognitive and physical functionalities. Today, the evolution of AI and robotics continues to be intertwined, with each field influencing and shaping the trajectory of the other, promising a future where intelligent robotic systems play pivotal roles in diverse applications.

Foundations of AI in Robotics

Several fundamental concepts of artificial intelligence (AI) underpin its use in robotics. In this context, AI encompasses a spectrum of crucial elements.

- AI Fundamentals: The foundational concepts of artificial intelligence (AI) that apply directly to robotics, together with the key principles that shape how AI is integrated into robotic systems.

- The items below examine these foundational concepts and the principles that shape the integration of AI into robotic systems:

- Artificial Intelligence (AI): AI, a branch of computer science, focuses on creating systems capable of performing tasks that typically require human intelligence. These tasks encompass problem-solving, decision-making, learning, and perception. In the context of robotics, AI plays a vital role in enabling machines to mimic human-like cognitive functions.

- AI Fundamentals: These constitute the core principles and techniques underpinning the development of AI systems. They include:

- Machine Learning

- A subset of AI, machine learning involves training algorithms to learn from data and make predictions or decisions. In robotics, this capability enables machines to adapt to changing environments and learn from their experiences.

- Computer Vision

- Computer vision empowers machines, including robots, to interpret and comprehend visual information from their surroundings. This capability is critical for tasks such as object recognition and navigation.

- Natural Language Processing (NLP)

- NLP focuses on enabling machines to understand and interact with human language. In robotics, this facilitates communication between robots and humans, enhancing user-friendliness and versatility.

- Decision-Making Algorithms

- These algorithms are essential for robots to make choices and decisions based on input data. They empower robots to respond to different situations and adjust their behavior accordingly.

- Neural Networks

- Neural networks, a class of machine learning models loosely inspired by the structure of the human brain, excel in tasks involving pattern recognition and complex data analysis.

- Integration into Robotics: Robotics, the field dealing with the design, construction, operation, and utilization of robots, benefits significantly from the integration of AI fundamentals. This integration equips robots with intelligence, enabling them to perform tasks demanding decision-making, perception, and adaptation.

- Comprehensive Examination: Examining AI fundamentals in the context of robotics means understanding how machine learning models can be trained for specific tasks, how computer vision enables robots to “see” and interpret their environment, and how NLP facilitates human-robot communication.

- Key Principles: Studying these fundamentals helps identify the key principles governing the synergy between AI and robotics. These principles guide the development of AI-driven robotic systems, helping ensure their effectiveness, safety, and ability to fulfill their intended functions.

- Applications: The integration of AI fundamentals into robotics has a wide range of applications, spanning from manufacturing and healthcare to space exploration and autonomous vehicles. It empowers robots to operate autonomously, adapt to dynamic environments, and interact with humans in more natural ways.

- In short, AI fundamentals in the context of robotics are the foundational concepts of artificial intelligence directly relevant to robotic systems. Understanding them clarifies AI’s role in robotics and guides the development of intelligent robots able to perform tasks previously deemed challenging or impossible. It represents a crucial step in advancing the capabilities and applications of AI-powered robotics.

- Embrace Machine Learning: Machine learning algorithms, such as neural networks, play a central role in robotics. These algorithms let robots acquire knowledge from data, fostering continuous improvement in their performance.

- The items below look at how machine learning algorithms, especially neural networks, enable robots to learn from data and improve over time.

- Machine Learning Algorithms

- Machine learning, a subset of artificial intelligence, focuses on developing algorithms and models that allow systems, including robots, to learn and make predictions or decisions based on data. These algorithms can range from simple linear regressions to complex neural networks.

- Neural Networks

- Neural networks, a class of machine learning algorithms inspired by the structure and function of the human brain, consist of interconnected nodes, or artificial neurons, organized into layers. Neural networks excel at handling complex, high-dimensional data, making them well-suited for various robotic applications.

- Acquiring Knowledge from Data

- Machine learning algorithms, including neural networks, empower robots to learn from the data they collect. Instead of relying solely on pre-programmed instructions, robots can adapt and improve their performance by analyzing data and adjusting their behavior accordingly.

- Continuous Improvement

- The integration of machine learning in robotics supports continuous improvement. As robots gather more data and learn from their experiences, they can refine their abilities over time. This adaptability allows them to handle a broader range of tasks and perform with increasing efficiency and accuracy.

- Performance Enhancement

- Machine learning enables robots to enhance their performance in various ways. They can optimize their movements, recognize objects and patterns, make better decisions in dynamic environments, and even predict future events based on historical data.

- Versatility

- Machine learning algorithms, particularly neural networks, are versatile and applicable to various robotic tasks. This includes object recognition, natural language processing, autonomous navigation, and even fine motor skills like grasping objects with dexterity.

- Data-Driven Decision-Making

- By embracing machine learning, robots transition from rule-based systems to data-driven decision-makers. They can generalize from data, adapt to new situations, and improve their performance in unstructured and evolving environments.

- Applications

- Machine learning in robotics has far-reaching applications, from self-driving cars and industrial automation to healthcare robotics and personal assistants. In each of these domains, robots can use machine learning to enhance their capabilities and deliver more effective and efficient results.

- In robotics, machine learning algorithms, particularly neural networks, play a pivotal role in enabling robots to learn from data and continuously improve their performance. This adaptability and data-driven approach make robots more versatile, capable of handling a wide range of tasks, and well suited to applications in diverse fields. It represents a significant advancement in the field of robotics and AI, promising smarter and more capable robotic systems.
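To make "learning from data" concrete, here is a minimal sketch of the simplest model mentioned above, a linear regression, fitted by gradient descent. The scenario (mapping commanded motor power to measured wheel speed), the data values, and the function name `fit_line` are all made up for illustration and do not come from any particular robot or library.

```python
# Hypothetical calibration data: commanded motor power -> measured wheel speed.
# All values and names here are illustrative assumptions.

def fit_line(xs, ys, lr=0.01, epochs=5000):
    """Fit y ~= w * x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the error.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

powers = [1.0, 2.0, 3.0, 4.0, 5.0]
speeds = [2.1, 3.9, 6.2, 7.8, 10.1]
w, b = fit_line(powers, speeds)

# Predict the speed for a power setting the robot has not tried yet.
predicted_speed = w * 6.0 + b
```

A neural network follows the same pattern at larger scale: it has many more parameters than `w` and `b`, but they are still adjusted step by step to reduce an error measured on data.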

- Harness Computer Vision: Computer vision is central to the AI-robotics interplay. This technology enables robots to interpret and understand visual information, enhancing their perceptual capabilities and facilitating interaction with their environment.

- The items below explore how computer vision lets robots interpret visual information and interact with their surroundings:

- Computer Vision

- Computer vision, a branch of artificial intelligence, teaches computers, including robots, to interpret and understand visual information from the world around them. It involves processing and analyzing images and videos to extract meaningful data.

- AI-Robotics Interplay

- This phrase underscores the synergistic relationship between AI and robotics. AI provides robots with the ability to learn, adapt, and make decisions, while robotics offers the physical platform for AI to interact with the physical world. Computer vision serves as a vital bridge between these two domains.

- Interpreting and Understanding Visual Information

- Computer vision equips robots with the ability to “see” and interpret visual data. This includes recognizing objects, understanding their spatial relationships, and identifying patterns or anomalies in visual input.

- Enhancing Perceptual Capabilities

- By integrating computer vision, robots can perceive and make sense of their surroundings in ways loosely analogous to human sight. This capability enables them to gather crucial information from their environment, such as identifying objects, people, and obstacles, or even reading text.

- Facilitating Interaction with the Environment

- The ability to understand visual information is fundamental for robots to interact effectively with their environment. It enables them to navigate safely by avoiding obstacles, locating and manipulating objects, and even understanding and responding to human gestures and expressions.

- Real-Time Decision-Making

- Computer vision provides robots with real-time data about their environment, allowing them to make informed decisions on the fly. For example, a robot equipped with computer vision can adjust its path when it detects an unexpected obstacle or pick up and manipulate objects based on visual cues.

- Applications

- The significance of computer vision extends to a wide range of applications, from autonomous vehicles and manufacturing robots to healthcare and surveillance systems. It empowers robots to perform tasks that require visual perception, making them more versatile and adaptable.

- “Computer Vision” in AI-robotics interplay is pivotal because it allows robots to perceive and interpret visual information actively. This enhancement of their perceptual capabilities enables them to interact effectively with their surroundings, expanding the potential applications of robots and bringing them closer to achieving tasks that were once considered challenging or impossible without human intervention. It represents a fundamental building block in advancing the capabilities of AI-powered robots across various industries and domains.
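As a rough illustration of extracting meaningful data from an image, the sketch below finds the bounding box of a dark object in a tiny grayscale frame by simple thresholding. Real robotic vision pipelines are far more elaborate; the frame contents, the threshold value, and the function name `find_dark_region` are assumptions made for this example.

```python
def find_dark_region(image, threshold=50):
    """Return the bounding box (top, left, bottom, right) of all pixels
    darker than `threshold`, or None if no pixel qualifies."""
    coords = [
        (r, c)
        for r, row in enumerate(image)
        for c, value in enumerate(row)
        if value < threshold
    ]
    if not coords:
        return None
    rows = [r for r, _ in coords]
    cols = [c for _, c in coords]
    return min(rows), min(cols), max(rows), max(cols)

# A hypothetical 4x5 grayscale frame: bright floor (200) with a dark object.
frame = [
    [200, 200, 200, 200, 200],
    [200,  10,  20, 200, 200],
    [200,  15,  12, 200, 200],
    [200, 200, 200, 200, 200],
]
box = find_dark_region(frame)
```

A navigation routine could use such a box to steer around the object, which is the kind of visual cue the real-time decision-making item above refers to.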

- Enable Intelligent Decision-Making: Decision-making algorithms empower robots to make intelligent choices based on input data, enhancing their autonomy and adaptability in complex scenarios.

- The items below break down how these algorithms allow robots to make sound choices:

- Decision-Making Algorithms

- These algorithms are sets of rules and computations that help machines, specifically robots, make choices or decisions. In the context of AI in robotics, these algorithms are crucial because they determine how a robot responds to various situations or inputs.

- AI in Robotics

- This refers to integrating artificial intelligence (AI) technologies into robots. AI enables robots to process data, learn from experiences, and make decisions, much like humans. This integration allows robots to perform tasks that require a level of intelligence and adaptability.

- Empowering Robots

- The goal here is to grant robots the ability to act independently and intelligently. Instead of relying on pre-programmed instructions for every possible scenario, robots equipped with advanced decision-making algorithms can analyze situations and choose the best course of action.

- Input Data

- Robots collect information from their surroundings through sensors, cameras, or other data sources. This data can include things like images, temperature readings, or other sensory inputs. The decision-making algorithms process this data to understand the robot’s environment.

- Enhancing Autonomy

- Autonomy in robotics means reducing human intervention and enabling robots to function more independently. Decision-making algorithms play a pivotal role in this by allowing robots to interpret data and make decisions without constant human guidance.

- Adaptability in Complex Scenarios

- Complex scenarios are situations that are not easily predictable and require nuanced decision-making. Robots with intelligent decision-making algorithms can adapt to these scenarios by analyzing data and selecting the most appropriate actions, even in situations they haven’t encountered before.

- The statement highlights the importance of developing advanced decision-making algorithms in AI-powered robotics. These algorithms empower robots to process input data and make intelligent choices, ultimately increasing their autonomy and adaptability in a wide range of complex situations. This advancement is crucial for robotics as it enables robots to operate effectively in dynamic and unpredictable environments.
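One simple way to picture a decision-making algorithm is as a scoring function: each candidate action gets a score computed from the input data, and the robot picks the highest. The sketch below assumes two hypothetical sensor readings (distance to the nearest obstacle and remaining battery) and hand-picked scoring rules; it is not taken from any specific robot controller.

```python
def choose_action(distance_to_obstacle_m, battery_fraction):
    """Pick the action with the highest score for the current readings."""
    # Clamp the distance so that anything beyond 2 m counts as "clear".
    clearance = min(distance_to_obstacle_m, 2.0) / 2.0
    scores = {
        # Moving forward is attractive when the path is clear.
        "forward": clearance,
        # Turning becomes attractive as an obstacle gets close.
        "turn": 1.0 - clearance,
        # Recharging outranks everything when the battery is nearly empty.
        "recharge": 1.5 if battery_fraction < 0.2 else 0.0,
    }
    return max(scores, key=scores.get)

print(choose_action(1.8, 0.9))  # clear path, healthy battery
print(choose_action(0.3, 0.9))  # obstacle close ahead
print(choose_action(1.8, 0.1))  # battery nearly empty
```

In a learning-based system the scores would come from a trained model rather than fixed formulas, but the final step of selecting the best-scoring action is often the same.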

- Empower Autonomous Operation: Fundamental AI concepts collectively empower robots to navigate intricate environments, interact with objects, and execute tasks autonomously. This autonomy is a key outcome of the integration of AI into robotics, marking a significant advancement in their capabilities.

- The statement emphasizes how fundamental AI concepts enable robots to operate independently in complex environments. Let’s delve deeper into this concept:

- Fundamental AI Concepts

- These foundational principles and techniques of artificial intelligence include machine learning, computer vision, natural language processing, and decision-making algorithms, among others. These concepts form the building blocks of AI technology.

- Empower Robots

- In this context, “empower” means giving robots the ability or authority to perform tasks independently. It implies enhancing their capabilities beyond simple pre-programmed tasks.

- Autonomous Operation

- Autonomous operation refers to a robot’s ability to function without constant human intervention. It involves the robot navigating its environment, interacting with objects, and executing tasks based on its understanding of the situation.

- Navigating Intricate Environments

- Robots equipped with AI can process data from sensors and cameras to navigate complex and dynamic environments. They can recognize obstacles, plan paths, and make real-time adjustments to avoid collisions or hazards.

- Interacting with Objects

- AI-powered robots can understand and manipulate objects in their surroundings. Computer vision allows them to recognize objects, and machine learning enables them to learn how to grasp and manipulate these objects effectively.

- Executing Tasks Autonomously

- The integration of AI concepts enables robots to perform a wide range of tasks independently. They can adapt to changing circumstances, troubleshoot issues, and make decisions in real time, all without human intervention.

- Significant Advancement

- Incorporating AI into robotics represents a significant leap in their capabilities. It surpasses traditional robotics, where robots are typically limited to repetitive and predefined tasks. With AI, robots can handle a broader array of tasks and operate in diverse, unstructured environments.

- The statement underscores how the integration of fundamental AI concepts empowers robots to operate autonomously in intricate environments. This autonomy enables robots to navigate, interact, and execute tasks independently, marking a substantial advancement in their capabilities. AI-equipped robots can adapt to a variety of scenarios, making them valuable in applications ranging from manufacturing and logistics to healthcare and space exploration.

Challenges in Integrating AI into Robotics

- Computational Complexity

- AI algorithms, particularly deep learning models, can demand substantial computational resources. Running these algorithms on resource-constrained robotic platforms can result in slow processing, increased power consumption, and potential overheating. Addressing this challenge necessitates the development of efficient hardware and software solutions.

- Hardware Limitations

- Many robots possess specific hardware configurations, and retrofitting them to accommodate AI capabilities can prove to be challenging and costly. Careful consideration of hardware compatibility is essential when integrating AI into existing robotic systems.

- Safety Concerns

- Ensuring the safety of AI-powered robots, especially in shared environments with humans, takes precedence. Safety concerns encompass not only physical safety (preventing collisions and accidents) but also ethical aspects of AI decision-making, including fairness, transparency, and accountability.

- Ethical Considerations

- As AI-powered robots gain autonomy and make decisions affecting humans, ethical considerations gain prominence. Ethical concerns may involve issues such as bias in AI algorithms, privacy infringements, or the consequences of AI-driven decision-making in critical domains like healthcare or autonomous vehicles.

- Data Dependency

- AI relies heavily on large, diverse datasets for training and operation. The collection, curation, and maintenance of such datasets can be expensive and may raise concerns about privacy and security.

- Interoperability

- Integrating AI into existing robotic systems can be intricate, particularly when dealing with legacy hardware or software. Achieving seamless interoperability between various components and systems remains an ongoing challenge.

Limitations

- Lack of Generalization

- Despite their capabilities, many AI models still struggle to generalize across diverse scenarios and environments. This limitation implies that robots might excel in controlled settings but encounter difficulties in unforeseen or unfamiliar situations.

- Limited Autonomy

- Despite progress in AI, most robots necessitate some level of human oversight or intervention, particularly in complex and unpredictable environments. Achieving full autonomy, where robots can independently handle a wide array of tasks, remains an ongoing aspiration.

- Energy Efficiency

- Running AI algorithms on battery-powered robots can be a significant constraint. Striking a balance between computational demands and energy efficiency is crucial to extending operational autonomy.

- Cost

- The development and deployment of AI-powered robots can be cost-prohibitive, constraining their adoption across various industries and applications. Reducing the cost of AI components and hardware is essential to enhance accessibility.

Potential Areas for Improvement

- Algorithm Efficiency

- Research into more efficient AI algorithms, model compression, and optimization techniques can substantially reduce computational complexity, making AI integration more feasible on resource-constrained robotic platforms.

- Safety Standards

- The development of industry-wide safety standards and certifications specific to AI-powered robots can ensure safe operation in diverse environments and enhance public trust.

- Ethical Frameworks

- Establishing ethical frameworks, guidelines, and regulations can help mitigate ethical concerns associated with AI in robotics and ensure responsible AI usage.

- Data Augmentation

- Techniques such as data augmentation, transfer learning, and synthetic data generation can enhance a robot’s ability to generalize from limited training data, thereby improving adaptability.

- Human-Robot Collaboration

- Advancements in human-robot collaboration, where humans and robots work together seamlessly, can leverage human expertise to complement AI capabilities in complex tasks.

- Energy-Efficient Hardware

- The ongoing development of energy-efficient hardware components, including low-power processors and sensors, can extend the operational time of AI-powered robots.

- Cost Reduction

- As AI and robotic technologies mature and become more mainstream, economies of scale may lead to reduced costs, making AI integration more affordable.
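The Algorithm Efficiency item above mentions model compression without naming a technique. One widely used form is weight quantization, chosen here purely as an illustration: floating-point weights are stored as small integers plus a shared scale factor, trading a little precision for less memory and cheaper arithmetic. The weights and function names below are hypothetical.

```python
def quantize(weights, bits=8):
    """Map floats to signed integers using one shared scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero weight list.
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in quantized]

weights = [0.5, -1.27, 0.03, 1.0]
q, scale = quantize(weights)
restored = dequantize(q, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each 8-bit code takes a quarter of the space of a 32-bit float, which is the kind of saving that matters on resource-constrained robotic platforms.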

The integration of AI into robotics is a complex endeavor characterized by numerous challenges and limitations. Addressing these challenges and limitations necessitates interdisciplinary efforts, including advancements in AI research, hardware development, safety standards, ethics, and collaboration between humans and robots. These concerted efforts will ultimately result in the creation of more capable, versatile, and safe AI-powered robotic systems across numerous industries and applications.

Future Trends

1. AI-Powered Autonomous Systems

- Self-driving Vehicles

- The development of autonomous vehicles, including cars, trucks, and drones, continues to advance. AI plays a crucial role in enabling these vehicles to navigate complex environments, make real-time decisions, and enhance road safety.

- Autonomous Robots

- AI-powered robots are gaining autonomy in various domains, such as manufacturing, agriculture, and healthcare. These robots can perform tasks with minimal human intervention, improving efficiency and productivity.

2. Human-Robot Interaction

- Social Robots

- Research in social robotics aims to create robots capable of understanding and responding to human emotions and social cues. These robots find applications in healthcare, companionship, and customer service.

- Natural Language Processing (NLP)

- Advances in NLP enable more natural and intuitive communication between humans and AI systems. Chatbots and virtual assistants are becoming increasingly sophisticated in understanding and generating human language.

3. AI in Healthcare

- Medical Imaging

- AI is revolutionizing medical imaging by improving the accuracy and efficiency of diagnosing diseases like cancer. AI-powered systems can analyze medical images, detect abnormalities, and assist medical professionals in making diagnoses.

- Drug Discovery

- AI is accelerating drug discovery processes by analyzing vast datasets, simulating molecular interactions, and identifying potential drug candidates. This has the potential to revolutionize pharmaceutical research.

4. Ethics and Bias in AI

- AI Ethics

- With AI playing an increasingly significant role in decision-making, ethics in AI are gaining prominence. Researchers are working on frameworks and guidelines to ensure fairness, transparency, and accountability in AI systems.

- Bias Mitigation

- Efforts are ongoing to identify and mitigate bias in AI algorithms, especially in applications like hiring, lending, and criminal justice, where biased AI can have significant societal implications.

5. AI in Education

- Personalized Learning

- AI is being used to tailor educational content and assessments to individual students, enhancing their learning experiences.

- Remote Learning Support

- AI-driven tools are helping educators and students adapt to remote and online learning environments.

Autonomous Robots

Autonomous robots can operate and perform tasks without continuous human intervention. They are equipped with sensors, software, and hardware that enable them to perceive their environment, make decisions, and take actions independently.

The significance of autonomous robots in various industries stems from their ability to enhance efficiency, safety, and productivity. In manufacturing, they automate repetitive tasks, leading to increased production rates and reduced labor costs. In healthcare, they assist with patient care and perform delicate surgeries with precision. In logistics, autonomous robots handle tasks like warehouse automation and package delivery. In agriculture, they automate the planting, harvesting, and monitoring of crops. Furthermore, autonomous robots play a vital role in space exploration, search and rescue missions, and even transportation via self-driving cars.

- Sensors

- Autonomous robots are equipped with a variety of sensors, including cameras, LiDAR (Light Detection and Ranging), ultrasonic sensors, gyroscopes, and accelerometers. These sensors provide the robot with real-time data about its surroundings. For example, cameras capture visual information, LiDAR measures distances by emitting laser beams, and accelerometers detect changes in motion. Combining data from these sensors allows the robot to create a detailed understanding of its environment.

- Perception

- Perception in autonomous robots involves processing the sensor data to make sense of the world. This includes tasks such as object recognition, where the robot identifies and categorizes objects, and scene understanding, where it interprets the overall context. Mapping involves creating a representation of the environment, which can be essential for navigation.

- Decision-Making

- Once the robot has perceived its environment, it uses this information to make decisions. These decisions can range from basic actions like avoiding obstacles to complex tasks such as planning a path or determining the best way to manipulate an object. Decision-making in robots can be rule-based, where pre-programmed instructions dictate actions, or more advanced, relying on machine learning algorithms to adapt to dynamic situations.

- Actuation

- Actuation involves executing the decisions made by the robot. The robot has actuators, which are mechanisms that allow it to physically interact with its environment. These can include motors for movement, robotic arms for manipulation, grippers for picking up objects, and wheels for mobility. Actuators translate the robot’s digital decisions into physical actions.

- Autonomous Navigation

- Autonomous navigation is a fundamental capability of autonomous robots. It involves the robot planning its path, avoiding obstacles, and adapting to changes in its environment without human intervention. Algorithms for navigation range from basic obstacle avoidance to sophisticated path planning, often paired with SLAM (Simultaneous Localization and Mapping) so that the robot can build a map and track its own position within it.

- Learning and Adaptation

- Autonomous robots can learn and adapt over time. This is a key feature that distinguishes them from purely rule-based systems. Machine learning algorithms, including reinforcement learning and deep learning, enable robots to learn from their experiences and improve their performance. For example, a robot can learn from trial and error to navigate a complex environment more efficiently.
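The path-planning step described under Autonomous Navigation can be sketched with a breadth-first search over a small occupancy grid. This is one of the simplest planners and is used here only as an illustration; the grid, the cell coordinates, and the function name `plan_path` are assumptions, and production systems typically use richer maps and planners.

```python
from collections import deque

def plan_path(grid, start, goal):
    """Breadth-first search on a 4-connected grid.

    grid[r][c] == 1 marks an obstacle. Returns the list of (row, col) cells
    from start to goal, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None

# A hypothetical map: a wall in the middle row forces a detour to the right.
grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
path = plan_path(grid, (0, 0), (2, 0))
```

Because breadth-first search explores cells in order of distance from the start, the first path it finds uses the fewest steps. If the map changes, for example when a sensor reports a new obstacle, the robot can update the grid and plan again.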

Machine Learning in Robotics

In robotics, machine learning techniques enable robots to learn from data and improve their performance. This process entails training robots to make decisions, recognize patterns, and adapt to changing environments based on the information they gather from sensors and other sources.

The significance of machine learning in robotics lies in its ability to enhance a robot’s autonomy, allowing it to independently handle complex tasks. Machine learning enables robots to acquire new skills, respond to unexpected situations, and continually refine their behavior. This technology has applications in various domains, including autonomous navigation, object recognition, natural language understanding, and real-time decision-making, thereby making robots more versatile and capable in a wide range of scenarios.

- Training and Data

- Machine learning models require training data, which is a set of examples used to teach the model how to make predictions or decisions. In the context of robotics, training data can come from various sources, including sensors, cameras, or demonstrations by human operators. For instance, training a robot to recognize objects requires a dataset of images in which the objects are labeled.

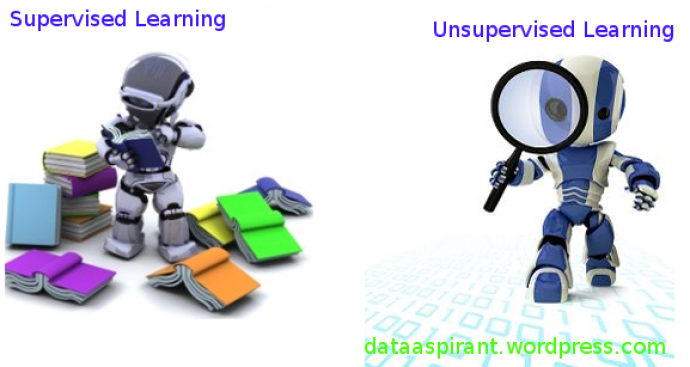

- Supervised Learning

- In supervised learning, the robot is provided with labeled training data, meaning each data point has a corresponding correct answer. The model learns to map input data to output actions based on these labels. For example, a robot can be trained to recognize different types of fruit from labeled images.

- Unsupervised Learning

- Unsupervised learning involves the robot discovering patterns and relationships in data without explicit labels. This can be useful for tasks like clustering similar objects or reducing the dimensionality of complex data, which can aid in navigation or perception tasks.

- Reinforcement Learning

- Reinforcement learning is a paradigm where the robot learns by interacting with its environment. It receives rewards or penalties based on its actions and uses these signals to optimize its behavior. This approach is commonly used for training robots to perform tasks like game playing, robotic control, or autonomous navigation.

- Deep Learning

- Deep learning is a subset of machine learning that utilizes artificial neural networks with multiple layers (deep neural networks). Convolutional neural networks (CNNs) are used for tasks involving images, while recurrent neural networks (RNNs) are suited to sequential data such as text, speech, or sensor time series. Deep learning has achieved remarkable success in various robotics applications, including image recognition, object detection, and natural language understanding.

- Real-Time Decision-Making

- Machine learning models can be deployed on robots to make real-time decisions based on sensor data. For instance, a robot equipped with a deep learning model can recognize and respond to obstacles or other objects in its path while navigating autonomously. This real-time adaptability is crucial for safe and efficient robot operation.

- Adaptation and Generalization

- Machine learning enables robots to adapt to new situations and generalize from their training data. This adaptability allows robots to perform effectively in dynamic and unstructured environments, making them valuable in applications that require flexibility and the ability to handle a wide range of scenarios.

Autonomous robots leverage a combination of sensors, perception, decision-making, and actuation to operate independently. Machine learning is a powerful tool that allows robots to learn from data, adapt to new situations, and enhance their capabilities, making them more versatile and efficient in various applications and domains.

How Machine Learning Is Utilized in Enabling Autonomy in Robots

Machine learning plays a pivotal role in enabling autonomy in robots by equipping them with the ability to operate independently, adapt to their environment, and make informed decisions. Let’s delve deeper into how experts utilize machine learning for this purpose:

- Data Gathering and Sensing

- Depending on their application, autonomous robots are equipped with various sensors such as cameras, LiDAR, and ultrasonic sensors. These sensors continuously collect data from the robot’s surroundings. Experts employ machine learning algorithms to process and interpret this data. For instance, they utilize computer vision algorithms to identify objects in camera images and employ LiDAR data for 3D mapping and obstacle detection.

- Perception and Environment Understanding

- Machine learning empowers robots to perceive and understand their environment. Through supervised learning, experts train robots to recognize objects, people, and obstacles in their surroundings. Unsupervised learning techniques help them discover patterns and relationships in the data, enabling tasks like clustering similar objects or identifying anomalies.

- Navigation and Path Planning

- To navigate safely and efficiently, autonomous robots are commonly trained with reinforcement learning and deep learning. These algorithms enable robots to learn optimal paths, avoid obstacles, and adapt their routes based on real-time data, all while optimizing for factors like time, energy efficiency, or safety.

- Real-Time Decision-Making

- Experts use machine learning models, particularly reinforcement learning, for making real-time decisions. Robots receive feedback from their actions in the form of rewards or penalties, allowing them to learn which actions lead to desirable outcomes. This becomes crucial for tasks such as controlling a robot’s movements, adjusting its behavior to unexpected situations, or making split-second decisions to avoid collisions.

- Adaptation to Changing Environments

- Machine learning algorithms enable robots to adapt to dynamic and unpredictable environments by learning from previous experiences and applying that knowledge to new situations. For example, a robot tasked with floor cleaning can learn to adapt its cleaning pattern based on room layout or the presence of obstacles.

- Human-Robot Interaction

- In scenarios involving human-robot interaction, experts harness natural language processing (NLP) and sentiment analysis so that robots can understand and respond to human commands and emotions. This makes robots easier to use and enables them to collaborate effectively with humans.

- Continuous Improvement

- Machine learning empowers robots to continuously enhance their performance over time. As robots learn from new data and experiences, they become more proficient at their tasks and capable of handling a broader range of scenarios. This adaptability ensures that robots remain effective even as their operating environment changes.

- Safety and Reliability

- Machine learning is instrumental in enhancing the safety and reliability of autonomous robots. For instance, predictive maintenance models anticipate when robot components are likely to fail, mitigating the risk of unexpected breakdowns and increasing robot uptime.
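As a rough illustration of the predictive-maintenance idea in the last item, the sketch below flags a component when its recent sensor readings drift well above a healthy baseline. This is a plain statistical threshold, not a trained model, and it stands in for the learned predictors a production system might use. The vibration values, window sizes, and the name `needs_maintenance` are all hypothetical.

```python
from statistics import mean, stdev


def needs_maintenance(readings, baseline_size=10, window=3, k=3.0):
    """Return True if the latest readings drift above the healthy baseline.

    The first `baseline_size` readings are assumed to come from a healthy
    component. The mean of the last `window` readings is compared with
    baseline mean + k * baseline standard deviation.
    """
    if len(readings) < baseline_size + window:
        raise ValueError("not enough readings for a baseline and a window")
    baseline = readings[:baseline_size]
    recent = readings[-window:]
    threshold = mean(baseline) + k * stdev(baseline)
    return mean(recent) > threshold


# Hypothetical motor vibration amplitudes (arbitrary units).
BASELINE = [1.00, 1.02, 0.98, 1.01, 0.99, 1.00, 1.03, 0.97, 1.00, 1.00]
HEALTHY = BASELINE + [1.01, 0.99, 1.02]
WORN = BASELINE + [1.20, 1.35, 1.50]
```

Averaging over a short window rather than reacting to a single reading reduces false alarms from sensor noise, at the cost of a slightly later warning.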

Experts utilize machine learning as a foundational technology to empower robots for autonomous operation and intelligent decision-making in complex and dynamic environments. Machine learning enables robots to effectively perceive, understand, and interact with their surroundings. As machine learning continues to advance, its role becomes increasingly critical in enhancing the autonomy, adaptability, and capabilities of robots across various industries and applications.

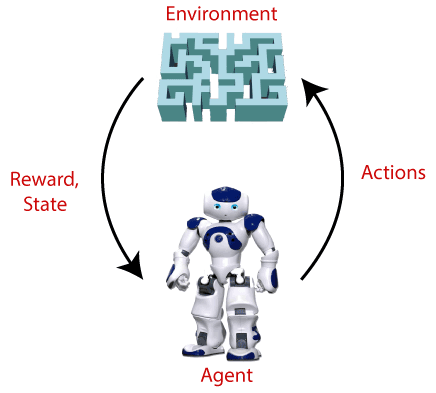

Reinforcement Learning (RL)

In reinforcement learning, an agent learns to make sequences of decisions in an environment to maximize a reward signal. It finds application in scenarios where an agent interacts with its surroundings and learns from the consequences of its actions.
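The reward-driven loop described here can be sketched with tabular Q-learning, one common reinforcement learning method. The environment is a hypothetical one-dimensional corridor: the agent starts in the leftmost cell and earns a reward of 1 only when it reaches the rightmost cell. The corridor, the hyperparameter values, and the function names are assumptions chosen for illustration.

```python
import random


def train_corridor(n_states=5, episodes=500, alpha=0.5, gamma=0.9,
                   epsilon=0.2, seed=0):
    """Learn Q-values for a corridor whose goal is the rightmost cell."""
    rng = random.Random(seed)
    moves = (-1, +1)                       # action 0 = left, action 1 = right
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        state = 0
        while state != goal:
            if rng.random() < epsilon:
                action = rng.randrange(2)  # explore
            else:
                best = max(q[state])       # exploit, breaking ties randomly
                action = rng.choice(
                    [i for i, value in enumerate(q[state]) if value == best])
            # Walls clamp the agent inside the corridor.
            nxt = min(max(state + moves[action], 0), goal)
            reward = 1.0 if nxt == goal else 0.0
            # Q-learning update; the goal row is never updated and stays 0.
            target = reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q


def greedy_policy(q):
    """Best learned action for every non-goal state."""
    return ["left" if row[0] > row[1] else "right" for row in q[:-1]]


policy = greedy_policy(train_corridor())
```

After training, the greedy policy moves right in every cell, because the discount factor makes shorter routes to the reward more valuable. Real robotic tasks have far larger state spaces and usually replace the table with a function approximator such as a neural network.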

Applications of Reinforcement Learning

- Game Playing

- Reinforcement learning has achieved notable success in complex games such as chess, Go, and video games. For example, AlphaGo, a program that combines deep neural networks with tree search and reinforcement learning, defeated world champion Go players.

- Robotics

- RL is applied to teach robots tasks such as walking, flying, or grasping objects. Robots learn through trial and error, adapting their actions to maximize rewards and improve their skills over time.

- Autonomous Vehicles

- RL is used in self-driving research to learn driving strategies from simulated and real-world data and feedback, with the aim of adapting to changing traffic conditions for safe navigation.

- Recommendation Systems

- Reinforcement learning is used in recommendation systems, where the algorithm learns to suggest items, content, or actions that maximize user engagement and satisfaction.

- Healthcare

- RL can optimize treatment plans and drug dosage by considering patient responses and adjusting treatments for long-term well-being.

Supervised Learning

Supervised learning involves training a model on labeled data, enabling it to map input data to known output labels. This approach is used for tasks requiring predictions or data classification based on examples provided during training.
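To make the idea of mapping inputs to known labels concrete, here is a minimal sketch of a k-nearest-neighbours classifier, one of the simplest supervised methods. The fruit measurements (weight in grams, length in centimetres) and the function name `knn_predict` are invented for illustration. A real robot would more likely classify features extracted from images.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Predict a label for `query` by majority vote of its k nearest examples.

    train is a list of (features, label) pairs; features are numeric tuples.
    """
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical labeled examples: (weight in g, length in cm) -> fruit.
TRAIN = [
    ((150.0, 7.5), "apple"),
    ((160.0, 7.8), "apple"),
    ((170.0, 8.0), "apple"),
    ((120.0, 18.0), "banana"),
    ((115.0, 19.5), "banana"),
    ((125.0, 17.0), "banana"),
]

label_a = knn_predict(TRAIN, (155.0, 7.6))
label_b = knn_predict(TRAIN, (118.0, 18.5))
```

The labels in `TRAIN` are the supervision: the classifier never learns what an apple is, only which labeled examples a new measurement most resembles. In practice the features would usually be scaled first so that one unit (grams here) does not dominate the distance.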

Applications of Supervised Learning

- Image Classification

- Supervised learning is commonly applied to image classification tasks, where models identify objects or features in images, such as detecting tumors in medical images or classifying objects in photos.

- Natural Language Processing (NLP)

- Supervised learning is used in NLP tasks like sentiment analysis, text classification, and machine translation. Models learn to understand and generate human language using labeled text data.

- Speech Recognition

- In speech recognition, supervised learning converts spoken language into text, used in applications like voice assistants and transcription services.

- Fraud Detection

- Supervised learning is employed in fraud detection systems to identify fraudulent transactions by learning from historical data containing legitimate and fraudulent examples.

- Recommendation Systems

- Alongside RL, supervised learning contributes to recommendation systems. Models learn user preferences from past interactions to suggest relevant products or content.

Unsupervised Learning

Unsupervised learning trains models on unlabeled data to discover patterns, structures, or groupings within the data. This approach is used when no predefined output exists, and the model seeks hidden insights.
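A short sketch of k-means clustering shows what discovering groupings without labels can look like. The 2-D points and starting centroids below are made up, and passing the initial centroids in explicitly is a simplification to keep the example deterministic. Library implementations normally choose them automatically.

```python
import math


def kmeans(points, centroids, iterations=10):
    """Group points around centroids using Lloyd's algorithm.

    Returns the final centroids and the clusters from the last assignment.
    """
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for point in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: math.dist(point, centroids[i]))
            clusters[nearest].append(point)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster))
            if cluster else centroids[i]   # keep an empty cluster's centroid
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical unlabeled observations forming two loose groups.
POINTS = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.6),
          (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
centroids, clusters = kmeans(POINTS, [(1.0, 1.0), (8.0, 8.0)])
```

No labels are supplied anywhere: the two groups emerge only from the distances between points. The number of clusters is still a choice the user makes, which is one of the practical limits of this method.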

Applications of Unsupervised Learning

- Clustering

- Unsupervised learning groups similar data points into clusters. For instance, it can group customers with similar purchasing behavior for targeted marketing campaigns.

- Dimensionality Reduction

- Techniques like Principal Component Analysis (PCA) reduce data dimensionality while preserving essential features, which aids data visualization and simplifies subsequent modeling.

- Anomaly Detection

- Unsupervised learning identifies data anomalies or outliers, valuable for fraud detection, network security, and quality control.

- Generative Models

- Unsupervised learning trains generative models such as Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) for tasks like image generation, style transfer, and data synthesis.

- Natural Language Processing

- Unsupervised learning produces word embeddings that capture semantic relationships in text data, assisting tasks like document clustering and topic modeling.

Reinforcement learning, supervised learning, and unsupervised learning find diverse and extensive applications across various domains, including gaming, robotics, healthcare, recommendation systems, image processing, and natural language understanding. The choice of the learning paradigm depends on the specific problem, data availability, and the desired outcome.

Responsibility of Developers and Engineers in Ensuring Ethical AI in Robotics

- Establish Ethical Frameworks and Guidelines

- Developers and engineers create ethical frameworks and guidelines for AI in robotics. For example, they define rules that ensure robots respect individuals’ privacy when collecting data, such as not recording private conversations without consent.

- Mitigate Bias

- Engineers actively work to identify and mitigate biases in AI algorithms. For instance, they ensure that a facial recognition robot can accurately identify individuals of all ethnic backgrounds, avoiding racial bias in its recognition.

- Prioritize Transparency and Explainability

- Developers design models and algorithms that provide interpretable results. For example, they ensure that a medical diagnosis robot explains how it arrived at a particular diagnosis based on patient data.

- Establish Accountability and Liability

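- Engineers help clarify lines of accountability and liability for AI-powered robots. For instance, they document which party (such as the manufacturer, the operator, or a software supplier) is responsible for each part of a self-driving car’s behavior, so that responsibility can be traced if an accident occurs.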

- Protect Privacy

- Developers implement data protection measures to safeguard privacy. For instance, a home security robot is programmed not to share video footage with third parties without the homeowner’s explicit consent.

- Ensure Safety Measures

- Engineers prioritize safety through rigorous testing and fail-safe design. For example, they program a drone with an emergency landing feature to prevent accidents and damage in case of technical failures.

- Continuous Monitoring and Evaluation

- Developers set up mechanisms for ongoing monitoring and evaluation. For instance, they regularly update the software of a delivery robot to improve its navigation based on real-world experiences.

- Compliance with Regulations

- Engineers adhere to relevant regulations and standards. For example, they ensure that a healthcare robot complies with medical data privacy regulations to protect patient information.

- Ethical Decision-Making

- Developers program robots with ethical decision-making capabilities. For example, they instruct an AI tutor to refuse requests from students if the content is inappropriate or unethical.

- Public Engagement and Education

- Engineers engage with the public and educate them about AI. For instance, they create informational videos to explain how a language translation robot works and its potential limitations.

- Consider Ethical Implications in Design

- Developers incorporate ethical considerations into the design phase. For example, they ensure that a social robot designed for eldercare respects the dignity and independence of the elderly.

- Responsible Deployment

- Engineers ensure responsible deployment of AI-powered robots. For example, they may decide not to deploy a surveillance robot in a residential area in order to protect residents’ privacy.

- Ethical Testing and Validation

- Developers integrate ethical testing into the development lifecycle. For instance, they conduct tests to ensure that a social interaction robot behaves ethically when interacting with children.
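One of the responsibilities above, mitigating bias, lends itself to a simple automated check. The sketch below compares a recognition model's accuracy across demographic groups and reports the largest gap. The record format, the group names, and any threshold a team might apply to the gap are hypothetical, and a real audit would cover more metrics than accuracy.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group from (group, predicted, actual) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(records):
    """Difference between the best and worst per-group accuracy."""
    accuracies = accuracy_by_group(records)
    return max(accuracies.values()) - min(accuracies.values())


# Hypothetical evaluation results for two groups of test subjects.
RECORDS = [
    ("group_a", "alice", "alice"), ("group_a", "bob", "bob"),
    ("group_a", "carol", "carol"), ("group_a", "dave", "dave"),
    ("group_b", "erin", "erin"), ("group_b", "frank", "grace"),
    ("group_b", "heidi", "heidi"), ("group_b", "ivan", "judy"),
]
gap = max_accuracy_gap(RECORDS)
```

A check like this can run as part of the ethical testing step in the development lifecycle, failing the build when the gap exceeds a limit the team has agreed on.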

Potential Areas for Future Research and Development

The following areas offer potential for future research and development in robotics and artificial intelligence (AI):

- Advancing Human-Robot Collaboration

- In this area, researchers aim to make interactions between humans and robots more intuitive and efficient. They could develop robots capable of understanding and responding to natural language commands and gestures, improving their adaptability and usability. For instance, robots for elderly care could understand spoken instructions and provide assistance accordingly.

- Promoting Ethical AI and Robot Behavior

- As robots become more autonomous, ethical considerations come into play. Future research involves designing AI algorithms and mechanisms that enable robots to make ethically sound decisions. For example, researchers might work on robots that can assess the moral implications of their actions and act accordingly, especially in situations involving medical care or emergency response.

- Advancing Autonomous Vehicles

- Research in this area concentrates on pushing the boundaries of self-driving cars, drones, and other autonomous transportation systems. Future developments could include making autonomous vehicles more resilient to extreme weather conditions, improving their real-time decision-making in complex traffic situations, and enhancing energy efficiency for longer battery life.

- Revolutionizing Robotics in Healthcare

- Healthcare robots are already playing a role in surgeries and patient care. Future research might involve creating smaller, more precise medical robots that can navigate delicate procedures with greater accuracy. Integrating AI for diagnosis and treatment optimization is another promising avenue of exploration.

- Transforming Agricultural Robotics

- The agricultural sector benefits from automation that increases crop yields and reduces labor costs. Future research may focus on developing robots that can independently analyze soil quality and adapt planting and harvesting techniques accordingly. These robots could use AI to optimize planting patterns and reduce the use of pesticides.

- Enhancing Swarm Robotics

- Research in this field aims to improve the coordination and efficiency of robotic swarms. Future developments could lead to more advanced algorithms for swarm behavior, enabling robots to collaborate seamlessly in complex environments. For example, swarm robots could be designed to perform large-scale environmental monitoring by coordinating their actions to cover a wide area efficiently.

- Advancing Robotic Exoskeletons

- Exoskeletons hold promise for enhancing mobility for individuals with physical disabilities and aiding workers in physically demanding jobs. Future research might focus on creating exoskeletons that are more lightweight and affordable, allowing broader accessibility. Customizable exoskeletons designed for various tasks could also be developed.

- Improving Humanoid Robots

- These robots aim to mimic human appearance and behavior. Future research may involve improving the agility and dexterity of humanoid robots. For example, researchers could work on robots that can perform tasks requiring fine motor skills, such as cooking or assembling delicate electronics.

- Enabling Natural Language Processing (NLP) for Robots

- Integrating NLP into robots allows them to understand and generate human language. Future developments could include robots capable of having more natural and context-aware conversations with humans. For instance, advanced NLP-powered robots could assist in customer service by providing detailed responses to complex queries.

- Enhancing AI Safety and Security

- With the increasing adoption of AI-driven robotics, ensuring the safety and security of these systems is paramount. Future research might focus on developing robust mechanisms to protect AI-powered robots from cyber threats, ensuring they can operate securely in a connected world. This could involve exploring techniques for intrusion detection and response within robotic systems.

- Advancing Environmental Robotics

- Robots designed for environmental applications, such as monitoring ecosystems or responding to natural disasters, are vital. Future research may involve developing more rugged and adaptable environmental robots that can withstand harsh conditions and gather data more effectively. These robots could use AI to make real-time decisions in challenging situations.

- Exploring Quantum Robotics

- Quantum computing may eventually benefit robotics by solving certain complex optimization problems more efficiently. Future research in this area might focus on developing quantum algorithms that can enhance the capabilities of robots, particularly in areas like logistics and resource allocation.

- Elevating Social Robotics

- These robots aim to provide companionship and assistance to humans in various contexts. Future research could involve improving the emotional intelligence and adaptability of social robots. For example, researchers might work on robots capable of recognizing and responding to users’ emotional states, providing companionship and support in healthcare settings or eldercare.

Each of these research areas presents unique opportunities to advance the capabilities and applications of robotics and AI. Researchers and engineers play a vital role in driving innovation in these domains, addressing societal needs, and ensuring that AI-driven robots are ethical, safe, and effective in various contexts.

Conclusion

The fusion of Artificial Intelligence (AI) and robotics heralds a new era of innovation and automation. While challenges like computational complexity, hardware limitations, and ethical concerns persist, the relentless pursuit of solutions and advancements in AI technology are propelling us toward a future where autonomous robots are an integral part of our daily lives. Machine learning, with its ability to enable robots to learn, adapt, and make decisions, lies at the heart of this transformation. It empowers robots to perceive, understand, and interact with their surroundings, fostering human-robot collaboration in various domains, from healthcare to agriculture, and from autonomous vehicles to social companionship.

The responsibility of developers and engineers in ensuring ethical AI in robotics cannot be overstated. They play a pivotal role in establishing ethical frameworks, mitigating biases, ensuring transparency, and prioritizing safety and reliability. By considering the ethical implications throughout the development lifecycle, they pave the way for responsible AI-powered robots that respect privacy, fairness, and accountability. Looking to the future, the possibilities are boundless. Advancements in human-robot collaboration, ethical AI decision-making, and autonomous vehicles are on the horizon. Robotics in healthcare, agriculture, and environmental monitoring promises to revolutionize these sectors. Quantum robotics, social robots with emotional intelligence, and AI-driven natural language understanding hold the potential to reshape how we interact with technology.